Key Takeaways

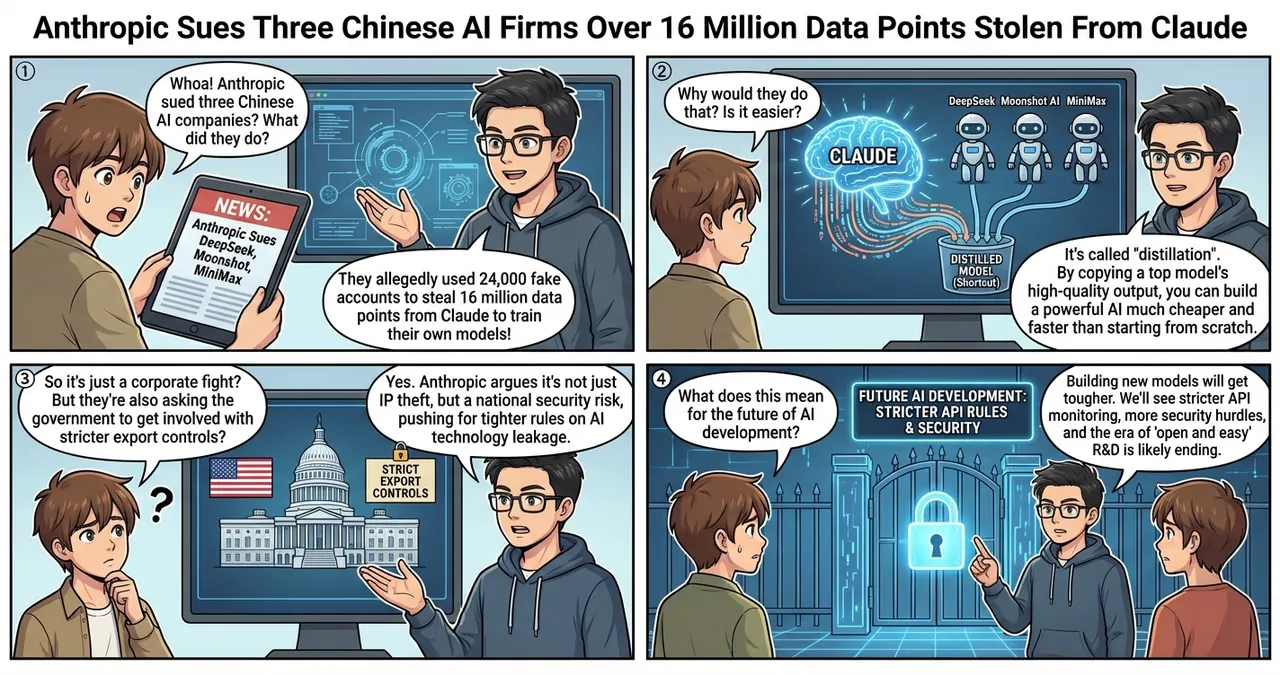

- Anthropic has filed a lawsuit against DeepSeek, Moonshot AI, and MiniMax.

- The companies allegedly used 24,000 fake accounts to illegally extract 16 million data points from Claude.

- Anthropic is strongly urging the U.S. government to strengthen export controls to prevent technology leakage.

The Details

Systematic Data Extraction

According to Anthropic’s announcement, the three Chinese AI startups (DeepSeek, Moonshot AI, MiniMax) are suspected of systematically incorporating Claude’s knowledge into their own models. Specifically, they allegedly operated large numbers of fake accounts, continuously sent prompts to Claude, and reused its responses as training data, a practice known as “distillation.”
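To make the idea of distillation concrete, here is a deliberately tiny sketch. It assumes nothing about how any real model or company works: the `teacher` function is a hypothetical stand-in for a large model, and the "student" is just a straight line fitted to the teacher's answers. The point is only that a student can recover a teacher's behavior from its responses alone, without ever seeing its internals.

```python
from statistics import mean


def teacher(x: float) -> float:
    """Hypothetical stand-in for a large model; the student never sees 3.0 or 2.0."""
    return 3.0 * x + 2.0


def distill(prompts: list[float]) -> tuple[float, float]:
    """Fit a linear student to the teacher's responses with ordinary least squares."""
    # Step 1: send every prompt to the teacher and keep the responses.
    responses = [teacher(x) for x in prompts]

    # Step 2: train the student on (prompt, response) pairs only.
    x_bar, y_bar = mean(prompts), mean(responses)
    covariance = sum((x - x_bar) * (y - y_bar) for x, y in zip(prompts, responses))
    variance = sum((x - x_bar) ** 2 for x in prompts)
    slope = covariance / variance
    intercept = y_bar - slope * x_bar
    return slope, intercept


slope, intercept = distill([float(i) for i in range(10)])
print(f"student learned: y = {slope:.2f}x + {intercept:.2f}")
```

Real distillation involves neural networks and text rather than a line and numbers, but the structure is the same: collect responses, then train on them.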

The Scale: 16 Million Data Points

What stands out in this case is its scale. The extracted data reportedly reached 16 million data points, which works out to roughly 670 per account if spread evenly across the 24,000 fake accounts, and it is believed to have been used to mimic the behavior and reasoning processes of a high-performance model. Anthropic maintains that this not only violates its terms of service but also constitutes unfair appropriation of intellectual property built with enormous R&D investment, which is why it took legal action.

Government Lobbying

Alongside the court filing, Anthropic is asking government agencies, including the U.S. Department of Commerce, to implement stricter export controls, including restrictions on AI model access. This suggests the matter extends beyond a simple corporate dispute into the contest for international technological supremacy.

Why Does This Matter?

This news highlights how a model’s “output” itself has become an extremely valuable training resource for competitors.

| Category | Traditional Training Data | The Disputed Method (Distillation) |
|---|---|---|
| Data source | Public web information, books, etc. | Responses generated by high-performance AI models |
| Advantage | Can be collected in large quantities at low cost | Efficiently learn from high-quality “correct examples” |
| Risk | Copyright and accuracy issues | Original model’s performance copied at low cost |
| Legal issue | Web scraping legality | ToS violation and intellectual property infringement |

Developing a high-performance model from scratch requires investments of hundreds of millions of dollars, but extracting data from another company’s model and using it for training could potentially achieve comparable performance at a fraction of the cost. The case squarely raises a difficult question: how far should this kind of “technological shortcut” be tolerated?

Impact on the Industry

Engineers and companies worldwide cannot treat this situation as someone else’s problem.

First, compliance with terms of service in API-based development will be enforced more strictly. While terms prohibiting “using model outputs for training other models” have been standard, enhanced monitoring means careful handling will be required even for research purposes.

Security changes are also expected. Increased restrictions on high-volume API requests and stricter account verification processes may affect development speed even for legitimate use cases.
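One common way to restrict high-volume requests is a token bucket, which allows short bursts but caps the sustained rate per account. The sketch below is illustrative only: it does not describe any provider's actual limits, and the class name, parameters, and numbers are all assumptions. The clock is injectable so the behavior can be checked without real waiting.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow short bursts while capping the sustained request rate."""

    def __init__(self, rate_per_sec: float, capacity: int,
                 now: Callable[[], float] = time.monotonic):
        self.rate = rate_per_sec        # tokens added per second
        self.capacity = capacity        # maximum burst size
        self.tokens = float(capacity)   # start with a full bucket
        self._now = now
        self._last = now()

    def allow(self) -> bool:
        """Return True if the request may proceed, False if it should be rejected."""
        current = self._now()
        # Refill in proportion to elapsed time, never above capacity.
        elapsed = current - self._last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self._last = current
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


# Simulated clock: a burst of 5 requests at t=0, then one more after 2 seconds.
clock = [0.0]
bucket = TokenBucket(rate_per_sec=1.0, capacity=3, now=lambda: clock[0])
burst = [bucket.allow() for _ in range(5)]
clock[0] += 2.0
after_wait = bucket.allow()
print(burst, after_wait)
```

For legitimate clients, the practical consequence is the one noted above: batch jobs that used to run at full speed may need backoff and retry logic once limits tighten.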

Points of Concern

A key question in this lawsuit is how much can be proven technically. Definitively establishing that generated text “was extracted from a specific model” requires advanced forensic techniques.

Additionally, there are concerns that strengthened regulations could hinder the culture of open R&D. The walls built to prevent technology leakage could potentially slow global technological development — a matter requiring careful debate.

Action Items for Developers

- Re-read platform terms of service: Pay particular attention to “output data usage restrictions” and verify your development processes don’t violate them.

- Increase API usage transparency: Maintaining traceability of which models your organization uses and for what purposes supports risk management.

- Consider protections for your own data: If you publish proprietary datasets or expose them through an API, now is the time to start considering anti-imitation measures.
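As one possible starting point for the traceability item above, the sketch below keeps a JSON-lines style record of which model was called and why, and flags any call whose output is destined for training so it can be checked against the provider's terms. The field names, the `record_call` and `flag_for_review` helpers, and the provider and model strings are all hypothetical; a real system would write to durable storage rather than an in-memory list.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class ApiCallRecord:
    timestamp: str
    provider: str
    model: str
    purpose: str
    output_used_for_training: bool


def record_call(log: list[str], provider: str, model: str, purpose: str,
                output_used_for_training: bool = False) -> ApiCallRecord:
    """Append one JSON line describing an API call to the audit log."""
    record = ApiCallRecord(
        timestamp=datetime.now(timezone.utc).isoformat(),
        provider=provider,
        model=model,
        purpose=purpose,
        output_used_for_training=output_used_for_training,
    )
    log.append(json.dumps(asdict(record)))
    return record


def flag_for_review(log: list[str]) -> list[dict]:
    """Return calls whose outputs feed model training and so need a ToS check."""
    records = [json.loads(line) for line in log]
    return [r for r in records if r["output_used_for_training"]]


audit_log: list[str] = []
record_call(audit_log, "example-provider", "example-model", "customer support drafts")
record_call(audit_log, "example-provider", "example-model", "synthetic data experiment",
            output_used_for_training=True)

for item in flag_for_review(audit_log):
    print(item["model"], "->", item["purpose"])
```

Even a log this simple answers the two questions that matter in a compliance review: which models are in use, and whether any of their outputs end up in a training pipeline.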

Summary

This lawsuit may become a historic turning point in defining and protecting intellectual property in AI development. In an era where model outputs themselves hold asset value, companies face new challenges in balancing technological innovation with data protection. The legal battles ahead and regulatory developments across nations will shape the rules for next-generation AI development.
