Key Takeaways

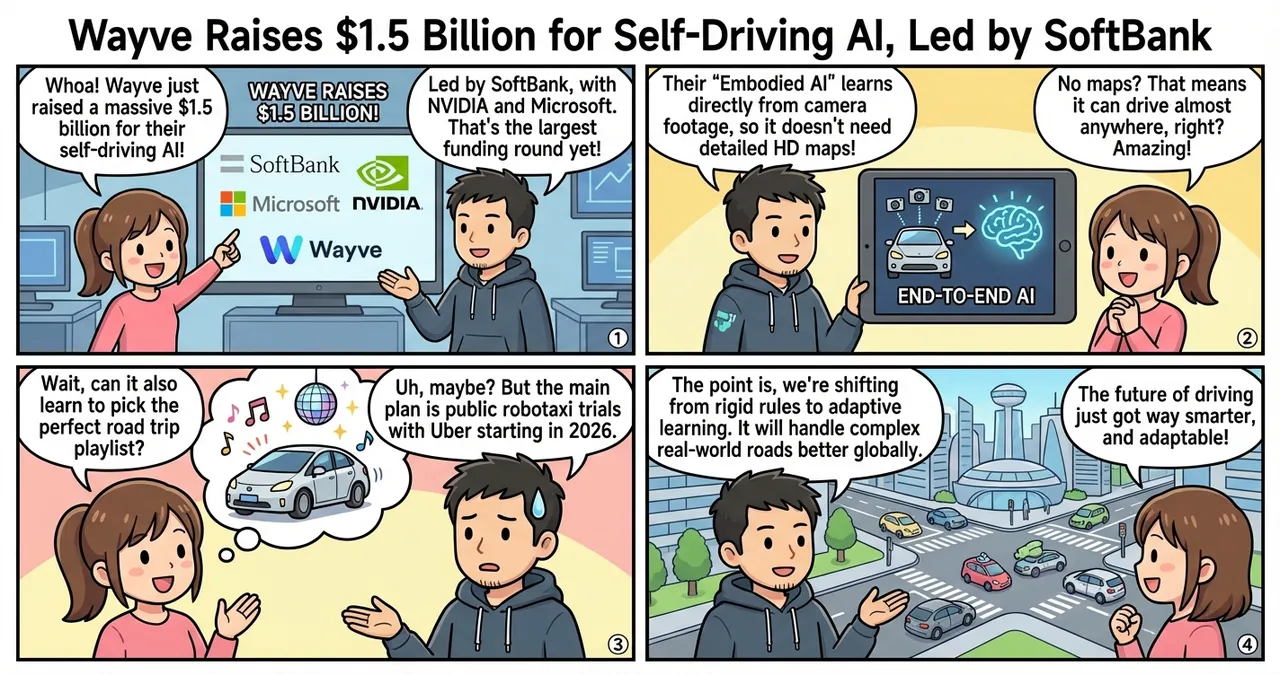

- Wayve has raised $1.5 billion (approximately ¥230 billion) from SoftBank, NVIDIA, Microsoft, and others — the largest funding round in its history.

- The company is accelerating commercialization of its “end-to-end AI” that learns to drive directly from camera footage without relying on HD maps.

- Through a strategic partnership with Uber, Wayve has laid out a concrete roadmap to begin public-road robotaxi trials in 2026.

The Details

London-based self-driving startup Wayve has completed a $1.5 billion Series D funding round. The investment was led by SoftBank Vision Fund 2, with participation from existing investor Microsoft and new investor NVIDIA.

Wayve advocates an approach it calls “AV 2.0,” which differs fundamentally from conventional self-driving technology. Traditional systems rely on extensive hand-written rules and high-definition (HD) maps, whereas Wayve’s technology is built around “Embodied AI”: the vehicle perceives its surroundings through cameras and other sensors, and a neural network maps that input directly to accelerator, brake, and steering commands.
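As a rough illustration of what “end-to-end” means here, the toy sketch below maps a flattened camera frame straight to steering, accelerator, and brake values in a single step. Everything in it (the `make_policy` and `drive` names, the 4x4 frame, the random weights) is a hypothetical stand-in. It is not Wayve’s architecture, which this article does not describe.

```python
import math
import random


def make_policy(num_pixels, seed=0):
    """Build a toy policy: one weight row per control output.

    Random weights stand in for parameters that a real system would
    learn from driving data.
    """
    rng = random.Random(seed)
    weights = [[rng.uniform(-0.1, 0.1) for _ in range(num_pixels)] for _ in range(3)]
    biases = [0.0, 0.0, 0.0]
    return weights, biases


def sigmoid(x):
    """Squash a real number into the range 0 to 1."""
    return 1.0 / (1.0 + math.exp(-x))


def drive(policy, pixels):
    """Map raw pixel intensities directly to control commands."""
    weights, biases = policy
    raw = [sum(w * p for w, p in zip(row, pixels)) + b for row, b in zip(weights, biases)]
    return {
        "steering": math.tanh(raw[0]),   # between -1 (left) and +1 (right)
        "accelerator": sigmoid(raw[1]),  # between 0 and 1
        "brake": sigmoid(raw[2]),        # between 0 and 1
    }


frame = [0.5] * 16  # hypothetical 4x4 grayscale frame, flattened
controls = drive(make_policy(len(frame)), frame)
print(controls)
```

The point of the sketch is structural: there is no separate perception, prediction, or planning module and no map lookup between the pixels and the controls.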

With this funding, Wayve will accelerate technology development and plans to begin robotaxi trials on Uber’s platform in 2026, with the goal of a general-purpose self-driving service that is not confined to specific regions.

What Makes This Impressive?

Wayve’s technical advantage lies in its generality and learning efficiency. The differences become clear when compared with conventional approaches:

| Comparison | Traditional Approach (AV 1.0) | Wayve’s Approach (AV 2.0) |
|---|---|---|
| Decision logic | Thousands of human-written rules | Learning-based via large-scale models |
| Map dependency | HD maps required | No maps needed (real-time visual data) |
| Scalability | Only in pre-mapped specific areas | Adaptable to roads worldwide |
| System architecture | Complex modular design | Simple end-to-end design |

With traditional methods, detailed maps have to be created in advance for each new city, which takes considerable time and money. Wayve’s system is designed to assess situations from visual information, much as a human driver does, even on roads it has never traveled. If that holds up in practice, it would cut deployment costs substantially and make worldwide rollout feasible.
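The map-dependency contrast can be made concrete with a toy example. In the sketch below, a map-dependent planner refuses to operate outside the areas present in its HD map, while a camera-only planner needs nothing but the current frame. The area names, the `HD_MAP` dictionary, and both planner functions are hypothetical, and the camera-only planner is a hand-written placeholder for what would be a trained network.

```python
# Hypothetical HD map: only areas surveyed in advance have an entry.
HD_MAP = {"city_a_downtown": {"speed_limit_kph": 30}}


def map_based_plan(area, obstacle_ahead):
    """AV 1.0-style planner: needs a map entry before it can act."""
    entry = HD_MAP.get(area)
    if entry is None:
        return None  # unmapped area: the system cannot operate here
    if obstacle_ahead:
        return {"action": "brake", "target_kph": 0}
    return {"action": "cruise", "target_kph": entry["speed_limit_kph"]}


def camera_only_plan(frame):
    """AV 2.0-style planner: depends only on what the camera sees.

    Placeholder logic: brake when more than half of the frame looks
    blocked. A real system would use a trained neural network here.
    """
    blocked_fraction = sum(frame) / len(frame)
    return {"action": "brake" if blocked_fraction > 0.5 else "cruise"}


mostly_clear = [0, 0, 0, 1]  # 1 marks a blocked cell in a tiny 2x2 frame
print(map_based_plan("city_a_downtown", obstacle_ahead=False))
print(map_based_plan("city_b_suburb", obstacle_ahead=False))  # never mapped
print(camera_only_plan(mostly_clear))  # no map entry required
```

The second call returns `None` because `city_b_suburb` was never surveyed, which is the scaling cost the paragraph above describes.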

Impact on the Industry

This news carries significant implications for automakers and AI engineers globally. With SoftBank taking a leading role, we can expect field trials and partnerships with domestic manufacturers in various markets to accelerate.

Many road environments feature narrow alleys, complex intersections, and diverse pedestrian movements — scenarios that are difficult to handle with rule-based programming. Wayve’s end-to-end learning model represents a strong solution for adapting to these unique environments. Additionally, demand for advanced edge-side inference processing will drive further growth in automotive semiconductor and embedded software development.

Points of Concern

While technological breakthroughs are expected, real challenges remain. The biggest concern is accountability, often called the black box problem. With end-to-end AI, it is difficult for humans to trace why a particular decision was made, which complicates determining liability and investigating causes when accidents occur.
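The contrast behind this concern can be sketched in a few lines. A rule-based controller can return the rule that fired alongside its decision, whereas a learned model returns only a result computed from weights that carry no human-readable meaning. Both functions and the weight values below are hypothetical and exist purely for illustration.

```python
def rule_based_brake(distance_m, speed_kph):
    """Return a decision together with the human-written rule behind it."""
    if distance_m < 10:
        return True, "rule 1: obstacle closer than 10 m"
    if speed_kph > 50 and distance_m < 30:
        return True, "rule 2: above 50 km/h with obstacle closer than 30 m"
    return False, "no rule fired"


# Made-up weights standing in for learned parameters.
WEIGHTS = (-0.08, 0.03)
BIAS = 0.4


def learned_brake(distance_m, speed_kph):
    """Return only a decision; the 'why' is buried in the weights."""
    score = WEIGHTS[0] * distance_m + WEIGHTS[1] * speed_kph + BIAS
    return score > 0


decision, reason = rule_based_brake(distance_m=8, speed_kph=40)
print(decision, reason)      # decision plus an auditable reason
print(learned_brake(8, 40))  # decision only, no explanation attached
```

With two weights the learned function is still easy to inspect by hand. The difficulty the article describes arises when the same structure is scaled to a large neural network.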

Furthermore, regulatory approval for the 2026 Uber trial is a point to watch. The flexibility that comes from not relying on maps could also be read as “low predictability,” so new evaluation criteria will likely be needed to demonstrate safety publicly.

Next Steps for Following This Story

- Review Wayve’s published technical papers and demo videos, including GAIA-1, its generative AI model for creating driving scenes, to understand its visual processing capabilities.

- Build foundational knowledge of current machine learning trends, including end-to-end (E2E) learning and world models.

- Analyze where Wayve fits within SoftBank Group’s investment strategy for the autonomous driving ecosystem.

Summary

With massive funding and powerful partnerships, Wayve has made a significant leap from the research stage to the commercialization phase. How its map-free “Embodied AI” approach performs in the 2026 Uber partnership will be closely watched. We are witnessing a fundamental transition in self-driving standards: from rules to learning.
