Key Takeaways

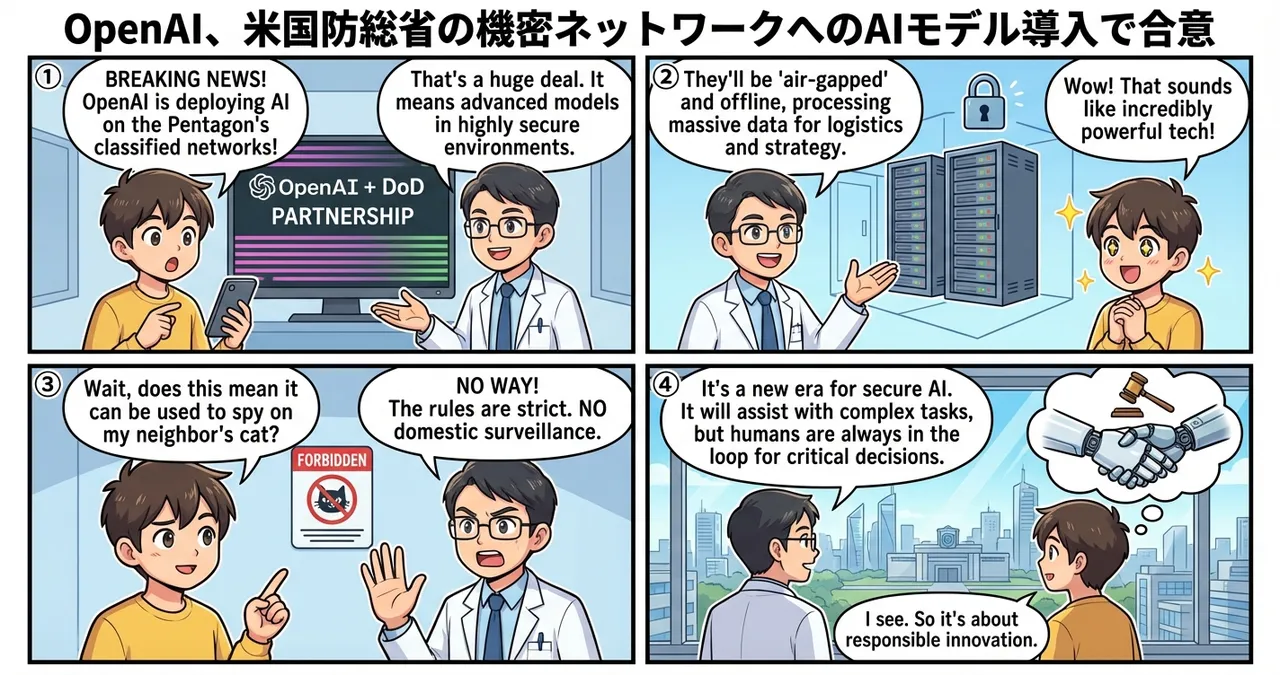

- OpenAI has secured a landmark agreement to deploy its advanced AI models onto the classified networks of the US Department of Defense.

- The partnership includes strict prohibitions against domestic surveillance and mandates that humans remain responsible for any use of force.

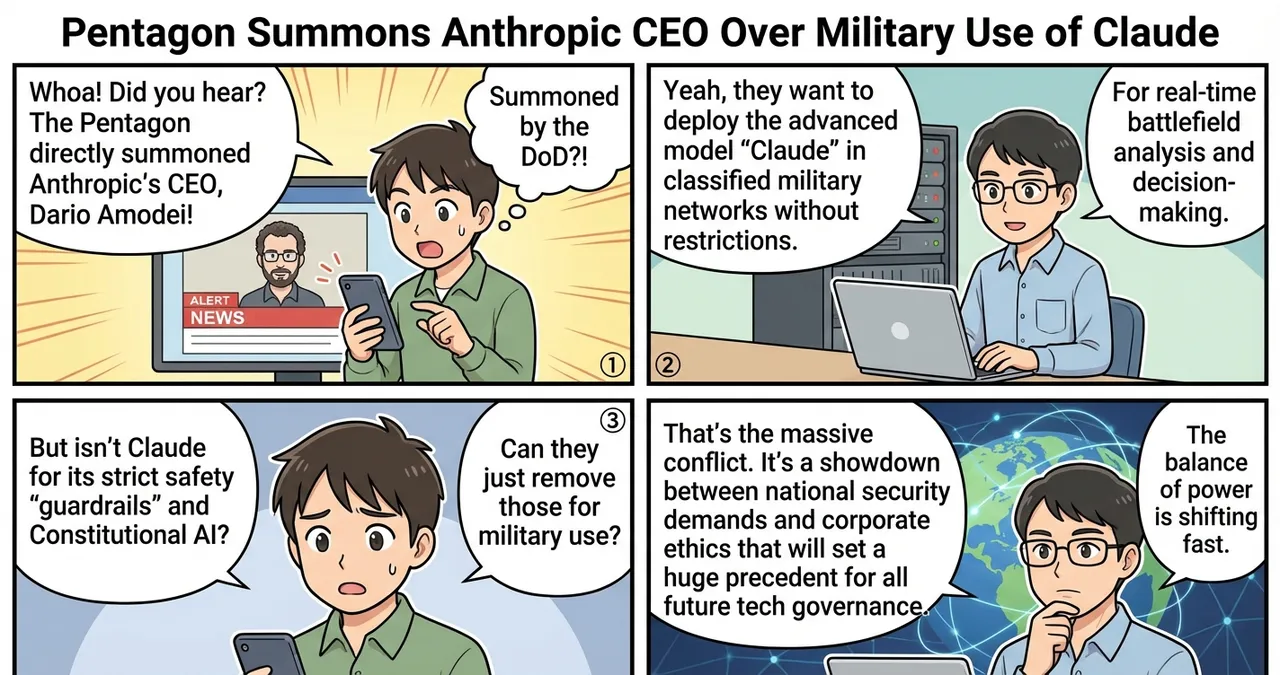

- This development follows the reported exclusion of Anthropic from similar high-level defense collaborations, signaling a shift in the competitive landscape for government contracts.

Detailed Breakdown

Strategic Partnership with the Pentagon

OpenAI has finalized a deal to integrate its large language models into the most secure environments managed by the US Department of Defense (DoD). This move marks a significant evolution in the relationship between Silicon Valley and the military establishment. Unlike previous commercial deployments, these models will operate within air-gapped or highly restricted networks intended for sensitive national security operations.

Ethical Guardrails and Conditions

The agreement is contingent upon several critical conditions designed to address public and ethical concerns regarding military AI. First, the models are strictly forbidden from being used for domestic surveillance of US citizens. Second, the “human-in-the-loop” principle is codified within the contract, ensuring that any decision involving the use of kinetic force or lethal action remains the sole responsibility of a human operator, not an autonomous system.

The Exclusion of Competitors

The timing of this announcement is notable, occurring shortly after reports surfaced that Anthropic, a major rival in the AI space, was sidelined from similar defense initiatives. This suggests a narrowing of the field for high-stakes government AI integration, with OpenAI positioning itself as the primary partner for the current administration’s defense modernization efforts.

Why Is This Significant?

The deployment of AI on classified networks represents a technical and logistical leap from standard cloud-based AI services. The following table highlights the differences between standard commercial deployment and this new defense-centric approach.

| Feature | Standard Commercial AI | Classified Defense AI |
|---|---|---|
| Connectivity | Public Cloud / Internet-dependent | Air-gapped / Local Classified Networks |
| Data Privacy | Subject to standard TOS | Sovereign data control; no external telemetry |
| Primary Goal | Productivity and creativity | Intelligence analysis and strategic logistics |
| Governance | Corporate policy | Federal law and military doctrine |

This transition signals that AI is no longer viewed merely as a productivity tool but as critical national infrastructure. By moving models into classified environments, the DoD can process massive datasets with far less exposure to the public internet, which reduces (though does not eliminate) the risk of data leaking to outside parties or foreign adversaries.

Impact on the Tech Industry

The agreement sets a precedent for how “dual-use” technology companies interact with government entities. For years, many tech firms avoided direct military involvement due to employee pushback and ethical concerns. OpenAI’s move signals a normalization of these partnerships, which may encourage other startups to seek similar high-value government contracts.

Engineers and researchers may see a shift in project funding and focus, with an increasing emphasis on security-hardened AI and offline model performance. This could lead to a bifurcation of the industry: companies that specialize in public-facing consumer AI and those that focus on “defense-grade” AI infrastructure.

Points to Consider

While the deal includes ethical safeguards, the practical implementation of these rules in a classified setting remains difficult for external parties to verify. The lack of transparency inherent in military networks means that public oversight will be limited.

Furthermore, the exclusion of other major players like Anthropic raises questions about market competition and whether a single company’s architecture should underpin such critical national security functions. There is also the ongoing challenge of model “hallucinations” in high-stakes environments, where an error in data interpretation could have severe geopolitical consequences.

Try It Yourself

While the classified models are not accessible to the public, individuals and organizations can take the following steps to understand the evolving landscape of AI and defense:

- Monitor Government Procurement: Visit SAM.gov to track official Department of Defense solicitations related to artificial intelligence and machine learning.

- Review OpenAI’s Usage Policies: Check the “Usage Policies” page on the OpenAI website to see how they define “Military and Warfare” use cases as the company’s stance evolves.

- Research Secure AI Frameworks: Explore documentation on “Air-gapped AI” and “Edge Computing” to understand the technical challenges of running large models without internet access.

Summary

OpenAI’s integration into the Pentagon’s classified networks marks a turning point for the AI industry, bridging the gap between commercial innovation and national defense. By agreeing to strict boundaries on domestic surveillance and the use of force, OpenAI has secured a leading position in the government sector. How this partnership unfolds is likely to influence the standards for governing AI systems in global security contexts.

Why It Matters

This news indicates that AI has become a central pillar of national security strategy. The move from public cloud to classified military networks suggests that the DoD now considers the technology mature enough for mission-critical defense operations, a shift that changes the relationship between the tech industry and the state.

Glossary

- Classified Network: A secure communication infrastructure used by government agencies to handle sensitive information, isolated from the public internet.

- Air-gapping: A security measure that involves isolating a computer or network from any external connections, including the internet, to prevent unauthorized access.

- Human-in-the-loop: A model of automation where a human is required to intervene or provide approval before a system performs a specific action.
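
The human-in-the-loop definition above can be illustrated with a minimal approval gate. This is only a sketch of the general pattern, not a description of how OpenAI or the DoD implement the principle. The names `ProposedAction`, `execute_with_human_gate`, and `console_approver` are hypothetical and introduced purely for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ProposedAction:
    """An action suggested by an automated system (hypothetical structure)."""
    description: str
    requires_approval: bool = True


def execute_with_human_gate(
    action: ProposedAction,
    approve: Callable[[ProposedAction], bool],
) -> str:
    """Carry out the action only if a human reviewer approves it."""
    # The system may propose, but the human decision is what unlocks execution.
    if action.requires_approval and not approve(action):
        return f"BLOCKED: {action.description}"
    return f"EXECUTED: {action.description}"


def console_approver(action: ProposedAction) -> bool:
    """Ask a person at the terminal; anything other than 'yes' is a refusal."""
    answer = input(f"Approve '{action.description}'? (yes/no) ")
    return answer.strip().lower() == "yes"
```

Calling `execute_with_human_gate(ProposedAction("reroute supply convoy"), console_approver)` would pause until a person types a response. The design choice worth noting is that refusal is the default: any answer other than an explicit "yes" blocks the action.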
