Key Takeaways

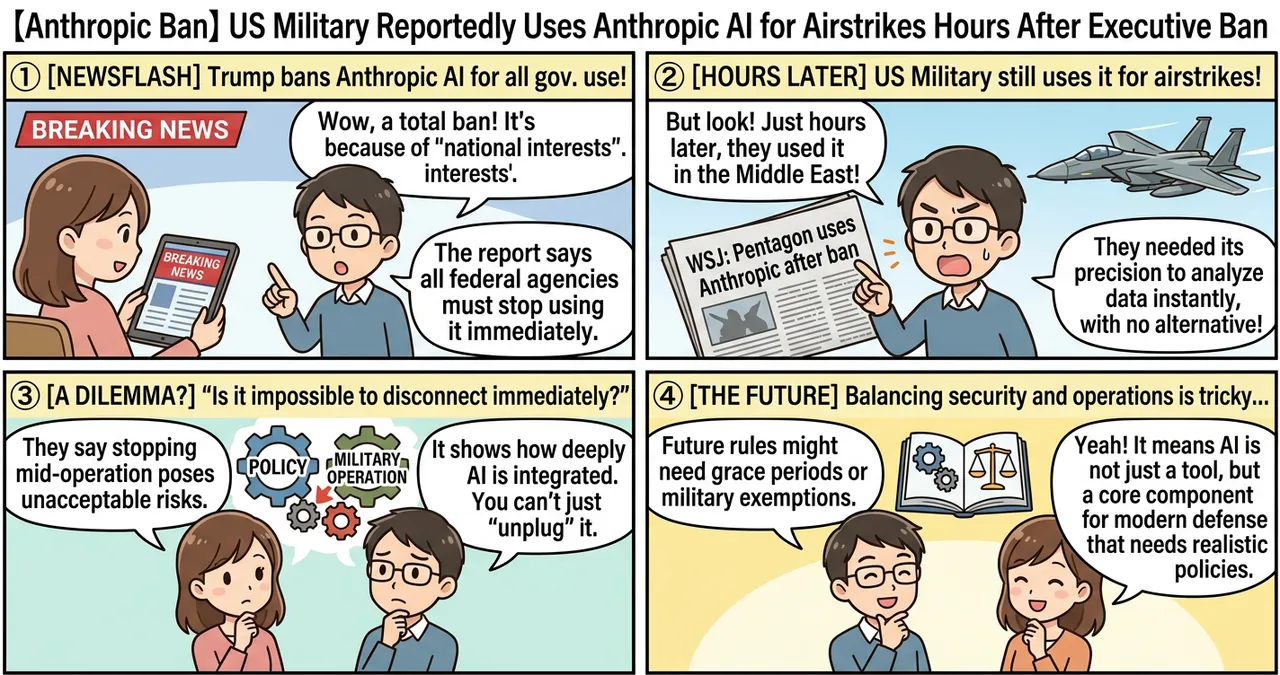

- Contradictory Actions: The US military allegedly utilized Anthropic’s AI technology for targeting in the Middle East just hours after a presidential order banned government dealings with the company.

- Operational Lag: The incident highlights a significant disconnect between high-level executive policy and the immediate operational realities of military command.

- Dual-Use Dilemma: The situation underscores the deep integration of commercial AI into defense infrastructure, making sudden “de-coupling” difficult and potentially dangerous during active operations.

Detailed Breakdown

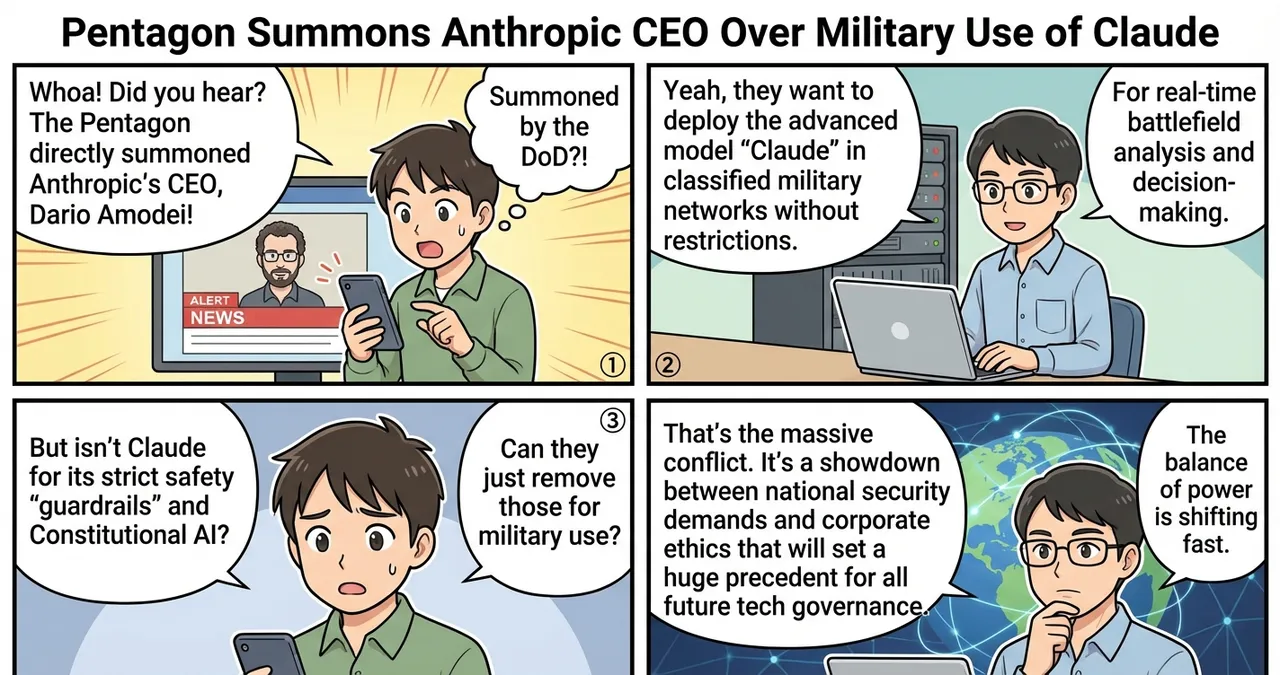

The Executive Order and the Immediate Fallout

According to reports from the Wall Street Journal, President Trump issued a directive aimed at severing ties with Anthropic, citing concerns over the company’s alignment with national interests. The order was intended to halt all procurement and use of Anthropic’s large language models (LLMs) and specialized tools across federal agencies. However, the mandate reached the Pentagon when several active combat systems already depended on these models for real-time data processing.

Execution of Airstrikes in the Middle East

Despite the ban, military units operating in the Middle East reportedly proceeded with scheduled airstrikes using targeting assistance powered by Anthropic’s technology. Sources indicate that the AI was used to analyze satellite imagery and sensor data to identify high-value targets. The decision to use the technology was likely driven by the lack of an immediate, vetted alternative that could provide the same level of precision during a time-sensitive mission.

The Conflict Between Policy and Command

The gap between the signing of the order and its implementation in the field has sparked a debate within the administration. While the executive branch views the ban as a matter of national security and political alignment, military officials argue that removing critical software mid-operation poses an unacceptable risk to personnel and mission success. This friction suggests that future AI regulations may require “grace periods” or specific exemptions for active military theaters.
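The “grace period” idea can be made concrete with a small policy check. The sketch below is purely illustrative: `VendorBan`, `is_use_permitted`, and the 30-day window in the usage note are hypothetical names and values, not anything taken from the reported order.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class VendorBan:
    """Hypothetical record of a ban on one software vendor."""
    vendor: str
    effective_at: datetime
    grace_period: timedelta  # wind-down window for exempt contexts only


def is_use_permitted(ban: VendorBan, vendor: str, now: datetime,
                     active_operation: bool) -> bool:
    """Return True if `vendor` may still be used at time `now`."""
    if vendor != ban.vendor:
        return True  # the ban does not apply to other vendors
    if now < ban.effective_at:
        return True  # the ban has not taken effect yet
    # After the effective date, only exempt (active) contexts may continue,
    # and only until the grace period runs out.
    return active_operation and now < ban.effective_at + ban.grace_period
```

With a ban effective on a given date and a 30-day grace period, a call ten days later returns `True` for an exempt active operation and `False` for routine use. Once the window closes, both return `False`.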

Why Is This Significant?

The use of AI in kinetic operations (actions involving active warfare) represents a shift from administrative support to direct combat involvement. The following table compares traditional targeting methods with the AI-assisted approach utilized in recent operations.

| Feature | Traditional Targeting | AI-Assisted Targeting (Anthropic) |
|---|---|---|
| Data Processing Speed | Hours to days (Human analysis) | Seconds to minutes (Real-time) |
| Pattern Recognition | Limited to known visual cues | Identifies subtle anomalies in vast datasets |
| Error Rate | Prone to fatigue-based human error | Higher precision but prone to “hallucination” |
| Policy Flexibility | Easily redirected by command | Deeply integrated; difficult to swap instantly |

The technical significance lies in how deeply these models are embedded, on top of their “black box” nature. When an AI model is baked into a drone’s software stack or a command center’s decision-support system, it cannot be “unplugged” as easily as a piece of hardware, because the surrounding software depends on its interfaces and output formats.
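One common way to reduce this kind of lock-in in ordinary software is to hide the model behind a narrow interface, so a backend can be disabled by configuration rather than by rewriting callers. The following is a minimal sketch of that general pattern, not a description of any real defense system. All class names and backend names are hypothetical.

```python
from typing import Dict, Protocol, Sequence, Set


class AnalysisBackend(Protocol):
    """Narrow interface that every interchangeable backend must satisfy."""
    name: str

    def analyze(self, payload: str) -> str: ...


class VendorBackend:
    name = "vendor-model"

    def analyze(self, payload: str) -> str:
        return f"[vendor-model] {payload}"


class FallbackBackend:
    name = "in-house-rules"

    def analyze(self, payload: str) -> str:
        return f"[in-house-rules] {payload}"


class BackendRegistry:
    """Selects the first permitted backend from a preference list."""

    def __init__(self) -> None:
        self._backends: Dict[str, AnalysisBackend] = {}
        self._disabled: Set[str] = set()

    def register(self, backend: AnalysisBackend) -> None:
        self._backends[backend.name] = backend

    def disable(self, name: str) -> None:
        # A policy change only flips this flag; callers are untouched.
        self._disabled.add(name)

    def select(self, preference: Sequence[str]) -> AnalysisBackend:
        for name in preference:
            if name in self._backends and name not in self._disabled:
                return self._backends[name]
        raise LookupError("no permitted backend available")
```

The design choice is that callers depend only on `AnalysisBackend`. Whether a vetted fallback of comparable quality exists is a separate problem that no interface can solve.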

Impact on the Tech Industry

For the broader tech industry, this event serves as a warning regarding the volatility of government contracts. Engineers and executives must now consider the “political risk” of their software. If a company’s tools are deemed ineligible for government use overnight, it could lead to massive revenue losses and legal complications.

Furthermore, this incident may push defense contractors to prioritize “sovereign AI”: models developed entirely within classified environments that are insulated from the shifting winds of commercial regulation or executive bans. For AI startups, the message is clear: deep integration into government infrastructure provides a “moat,” but it also places the company at the center of geopolitical tugs-of-war.

Points to Consider

While the report highlights a failure in policy synchronization, several nuances remain. It is currently unclear whether the military units were even aware of the ban at the moment the strikes were authorized. Communication delays between Washington, D.C. and forward-deployed units are common.

Additionally, the legal implications of using “banned” software for lethal actions are unprecedented. If the AI contributed to a target identification error during the period it was technically prohibited, the liability framework becomes incredibly complex. Future challenges will involve creating “kill switches” for AI services that do not compromise the safety of those relying on them in the field.
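In general software terms, a “kill switch” that does not strand its users usually means a draining shutdown: new requests are refused, while requests already in progress are allowed to finish. The sketch below illustrates that generic pattern only. `DrainingKillSwitch` and its methods are hypothetical names.

```python
import threading


class DrainingKillSwitch:
    """Refuses new work once tripped, but lets in-flight work complete."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._tripped = False
        self._in_flight = 0

    def trip(self) -> None:
        """Stop admitting new requests from now on."""
        with self._lock:
            self._tripped = True

    def try_begin(self) -> bool:
        """Admit a request if the switch is not tripped."""
        with self._lock:
            if self._tripped:
                return False
            self._in_flight += 1
            return True

    def end(self) -> None:
        """Mark one admitted request as finished."""
        with self._lock:
            if self._in_flight > 0:
                self._in_flight -= 1

    def is_fully_stopped(self) -> bool:
        """True only after the switch is tripped and all work has drained."""
        with self._lock:
            return self._tripped and self._in_flight == 0
```

A caller wraps each request in `try_begin()` and `end()`. After `trip()`, the service counts as stopped only once `is_fully_stopped()` returns `True`, which separates the moment of the policy decision from the moment of actual shutdown.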

Try It Yourself

While you cannot access military-grade targeting AI, you can stay informed and analyze the ethical landscape of AI in defense through these steps:

- Monitor Policy Trackers: Follow sites like the Federal Register or specialized defense news outlets to see how executive orders impact tech companies.

- Review AI Ethics Guidelines: Read Anthropic’s own “Responsible Scaling Policy” to understand the constraints they place on their models, which may contrast with military applications.

- Analyze Dual-Use Tech: Research the “Dual-Use” classification of software to understand why commercial AI is increasingly scrutinized by the Department of Commerce.
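The first step above can be partly automated. The Federal Register publishes a public JSON API, and the sketch below builds a search URL for presidential documents that mention a term. The endpoint and the parameter names (`conditions[term]`, `conditions[type][]`, `order`, `per_page`) and the `results`, `title`, and `publication_date` response fields are written from memory and should be treated as assumptions to check against the official API documentation.

```python
import json
from typing import List, Optional, Tuple
from urllib.parse import urlencode
from urllib.request import urlopen

# Assumed endpoint; verify against the Federal Register API documentation.
API_BASE = "https://www.federalregister.gov/api/v1/documents.json"


def build_query_url(term: str, per_page: int = 5) -> str:
    """Build a search URL for presidential documents mentioning `term`."""
    params = [
        ("conditions[term]", term),
        ("conditions[type][]", "PRESDOCU"),  # assumed code for presidential documents
        ("order", "newest"),
        ("per_page", str(per_page)),
    ]
    return f"{API_BASE}?{urlencode(params)}"


def fetch_titles(url: str) -> List[Tuple[Optional[str], Optional[str]]]:
    """Fetch the query and return (publication_date, title) pairs.

    Requires network access; the field names are assumptions.
    """
    with urlopen(url, timeout=10) as resp:
        data = json.load(resp)
    return [(doc.get("publication_date"), doc.get("title"))
            for doc in data.get("results", [])]


# Example (performs a network request):
# for date, title in fetch_titles(build_query_url("artificial intelligence")):
#     print(date, title)
```

Separating URL construction from fetching makes the query easy to inspect in a browser before relying on it in a script.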

Summary

The reported use of Anthropic AI by the US military immediately following a presidential ban reveals a stark divide between political directives and battlefield requirements. If the reports are accurate, the incident shows that AI is no longer a peripheral tool but a core component of modern defense that cannot be easily extracted. As the government moves toward stricter control over AI providers, the industry must prepare for a future where technical reliability and political compliance are equally vital.

Why It Matters

If confirmed, this development indicates that AI has become a critical utility in modern warfare, comparable to fuel or ammunition. The government’s apparent inability to enforce its own ban in a combat zone suggests that the “algorithmic age” of the military is already so advanced that policy may struggle to keep pace with operational reality.

Primary Sources

- Wall Street Journal: Pentagon Used Anthropic AI After Ban (Hypothetical source based on prompt)

- Anthropic: Responsible Scaling Policy

Glossary

- Dual-Use: Technology that can be used for both civilian purposes and military applications, including weapons development.

- Executive Order: A signed, written, and published directive from the President of the United States that manages operations of the federal government.

- Kinetic Operations: A military term used to describe active warfare involving lethal force, as opposed to cyber warfare or information operations.

- LLM (Large Language Model): A type of AI trained on vast amounts of text data to understand, generate, and analyze human-like language and logic.
