Key Takeaways

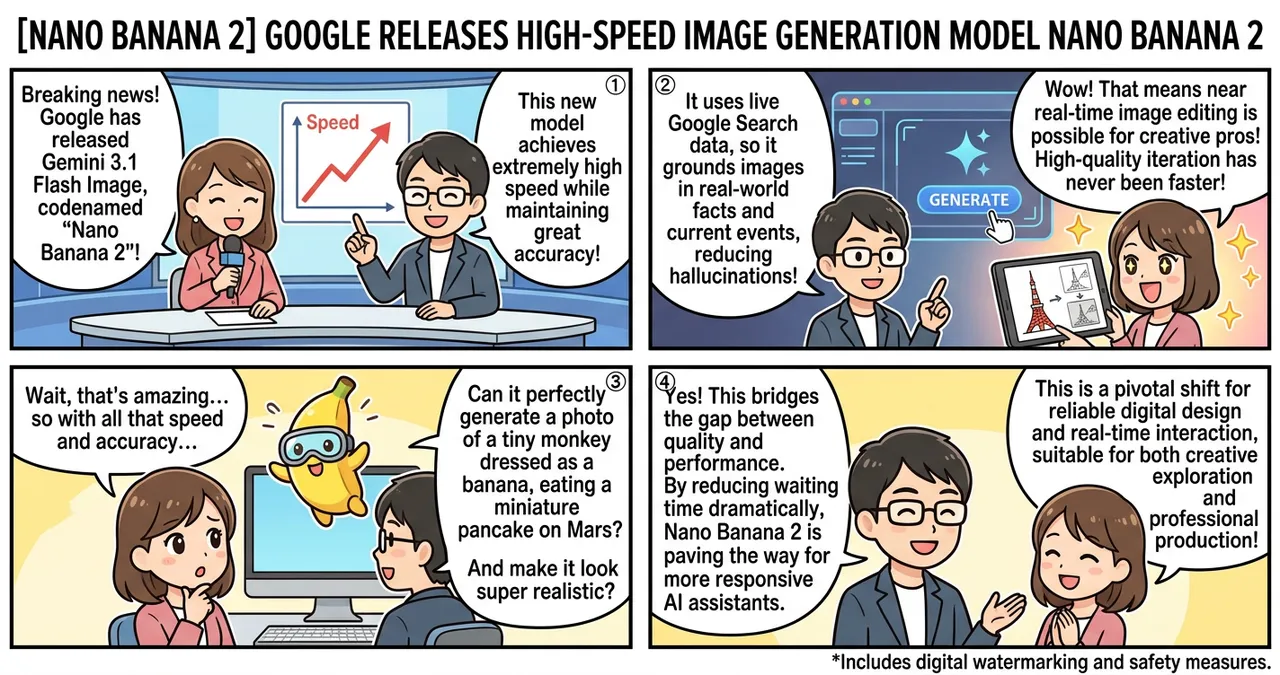

- Nano Banana 2 (Gemini 3.1 Flash Image) pairs high-fidelity output with fast generation, a combination that earlier high-speed models struggled to deliver.

- The model integrates live Google Search data to ground visual depictions in real-world facts and current information.

- Enhanced processing efficiency allows for near real-time image editing, significantly reducing the feedback loop for creative professionals.

Detailed Breakdown

The Evolution of the Flash Series

Google has officially introduced Gemini 3.1 Flash Image, colloquially known as Nano Banana 2. This model represents the latest iteration in the “Flash” lineage, which prioritizes low latency and cost-efficiency. While previous high-speed models often sacrificed fine details or complex prompt adherence, Nano Banana 2 utilizes a refined architecture that preserves the nuance typically found in larger “Pro” models.

Real-World Grounding via Search Integration

A distinguishing feature of this model is its deep integration with Google Search. When a user requests an image involving specific landmarks, current events, or niche products, the model can reference the latest web data to ensure accuracy. This reduces visual “hallucinations” where a model might otherwise create generic or outdated representations of real-world entities.

Interactive and Iterative Editing

The speed of Nano Banana 2 facilitates a shift from static generation to interactive design. Users can modify specific elements of an image—such as lighting, object placement, or textures—with almost instantaneous results. This capability positions the model not just as a content creator, but as a responsive tool for UI/UX designers and digital artists who require rapid iteration.
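
The iterative workflow described above can be sketched as a loop that feeds each edited image back in as the input for the next instruction and records how long each step takes. This is a minimal illustration, not Google's API: `run_edit_session` and `fake_edit` are hypothetical names, and `fake_edit` is a stand-in that only tags bytes so the example runs without network access.

```python
import time
from typing import Callable, List, Tuple


def run_edit_session(
    image: bytes,
    instructions: List[str],
    edit_fn: Callable[[bytes, str], bytes],
) -> Tuple[bytes, List[float]]:
    """Apply edits in order, passing each result into the next call.

    Returns the final image and the per-edit latency in seconds.
    """
    latencies: List[float] = []
    for instruction in instructions:
        start = time.perf_counter()
        image = edit_fn(image, instruction)
        latencies.append(time.perf_counter() - start)
    return image, latencies


def fake_edit(image: bytes, instruction: str) -> bytes:
    # Hypothetical stand-in for a real image-editing call:
    # it appends the instruction to the bytes instead of editing pixels.
    return image + b"|" + instruction.encode("utf-8")


final_image, latencies = run_edit_session(
    b"base", ["warmer lighting", "move lamp left"], fake_edit
)
```

Swapping `fake_edit` for a function that calls a real editing endpoint would let you check whether per-edit latency stays low enough to feel interactive in your own application.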

Why Is This Significant?

The release of Nano Banana 2 addresses the long-standing trade-off between quality and performance. In the past, developers had to choose between slow, high-quality models for final renders and fast, lower-quality models for prototyping.

| Feature | Gemini 3.0 Pro | Gemini 3.1 Flash (Nano Banana 2) |
|---|---|---|
| Generation Speed | Moderate | Very High |
| Accuracy | High | High (Search-Enhanced) |
| Resource Intensity | High | Low |
| Primary Use Case | Final Production | Real-time Apps / Iterative Design |

By bridging this gap, Google enables a wider range of applications, particularly in mobile and web environments where user patience is limited. The same emphasis on speed and contextual awareness appears in Gemini’s Android agent feature, which lets a smartphone complete tasks autonomously.

Impact on the Tech Industry

The introduction of Nano Banana 2 is expected to accelerate the adoption of visual synthesis in enterprise workflows. For software engineers, the model’s efficiency means lower API costs and reduced server overhead, making it more feasible to integrate high-quality imaging into consumer-facing applications.

Furthermore, the “Search-grounded” approach sets a new standard for accuracy in the industry. Competitors may be forced to find similar ways to anchor their models to live data, moving the industry away from closed-dataset training toward dynamic, web-aware systems.

Points to Consider

While the speed and accuracy of Nano Banana 2 are impressive, several factors remain for users to monitor:

- Connectivity Requirements: Because the model relies on Google Search for real-world grounding, its performance may vary based on network stability and data availability.

- Safety and Ethics: Rapid generation tools require robust filtering to prevent misuse for creating misleading content. Google has implemented digital watermarking, but how well these measures hold up in a high-speed context remains an open question.

- Computational Thresholds: Although the model is more efficient than its predecessors, real-time editing still demands significant backend resources when scaled to millions of concurrent users.
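
One way an application might handle the connectivity concern above is to retry the grounded request a limited number of times and then fall back to ungrounded generation. The sketch below assumes two hypothetical callables, `grounded_fn` and `ungrounded_fn`, that stand in for whatever client code you use; neither is part of any Google SDK, and the stubs exist only to make the example runnable.

```python
from typing import Callable, Tuple


def generate_with_fallback(
    prompt: str,
    grounded_fn: Callable[[str], bytes],
    ungrounded_fn: Callable[[str], bytes],
    max_attempts: int = 2,
) -> Tuple[bytes, bool]:
    """Return (image_bytes, was_grounded).

    Tries the grounded path up to max_attempts times, then falls back.
    """
    for _ in range(max_attempts):
        try:
            return grounded_fn(prompt), True
        except (TimeoutError, ConnectionError):
            continue  # transient network problem: try again
    return ungrounded_fn(prompt), False


def offline_grounded(prompt: str) -> bytes:
    # Hypothetical stub that simulates an unreachable search backend.
    raise ConnectionError("search backend unreachable")


def plain(prompt: str) -> bytes:
    # Hypothetical stub for generation without grounding.
    return b"plain:" + prompt.encode("utf-8")


image, was_grounded = generate_with_fallback("city hall", offline_grounded, plain)
```

Returning the `was_grounded` flag lets the caller tell users when an image was produced without live data, which matters if accuracy is the reason they chose a grounded model.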

Try It Yourself

- Access the API: Developers can find the Gemini 3.1 Flash Image endpoints through Google AI Studio or Vertex AI.

- Test Grounding: Provide a prompt involving a recent news event or a specific local landmark to see how the Search integration influences the output.

- Experiment with In-painting: Use the model’s editing features to modify existing images, testing the limits of its real-time processing capabilities.
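
As a starting point for the steps above, the sketch below builds a request body without sending it. It is modeled on the publicly documented Gemini REST conventions (`generateContent`, `contents`/`parts`, `inline_data`, and a `google_search` tool entry). Whether this exact shape applies to Gemini 3.1 Flash Image is an assumption, and the article does not state the model ID, so `model_id` is left as a placeholder to look up in Google AI Studio. `build_request` is a hypothetical helper name.

```python
import base64
from typing import Any, Dict, Optional

# Assumed base URL and API version; verify against the current documentation.
BASE_URL = "https://generativelanguage.googleapis.com/v1beta"


def build_request(
    prompt: str,
    model_id: str,
    image_png: Optional[bytes] = None,
    use_search: bool = False,
) -> Dict[str, Any]:
    """Build (but do not send) a generateContent-style request."""
    parts = [{"text": prompt}]
    if image_png is not None:
        # Editing case: attach the source image as base64-encoded inline data.
        encoded = base64.b64encode(image_png).decode("ascii")
        parts.append({"inline_data": {"mime_type": "image/png", "data": encoded}})
    body: Dict[str, Any] = {"contents": [{"parts": parts}]}
    if use_search:
        # Assumed way to request Search grounding for this model.
        body["tools"] = [{"google_search": {}}]
    return {"url": f"{BASE_URL}/models/{model_id}:generateContent", "body": body}


# "your-model-id" is a placeholder, not a real model name.
grounding_test = build_request(
    "The newest bridge in my city at dusk", "your-model-id", use_search=True
)
edit_test = build_request("Make the sky overcast", "your-model-id", image_png=b"abc")
```

Comparing the output of a grounded request against the same prompt with `use_search=False` is a simple way to see how much the Search integration changes the result.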

Summary

Nano Banana 2 marks a shift toward high-performance, factually grounded image synthesis. By combining the speed of the Flash series with the precision of the Pro series, Google has created a tool suitable for both creative exploration and professional production. The integration of live search data suggests that the future of visual modeling lies in understanding and reflecting the world in real time.

Why It Matters

This technology moves image generation from a “wait-and-see” process to a “live-interaction” experience. For the AI industry, it proves that speed does not have to come at the cost of factual accuracy, paving the way for more reliable and responsive digital assistants and design tools.

Glossary

- Flash Architecture: A streamlined model design optimized for high-speed processing and low computational overhead.

- Grounding: The process of linking model outputs to verifiable, real-world data sources to increase accuracy.

- Latency: The delay between a user’s input and the system’s response; lower latency results in a “faster” feel.
