Key Takeaways

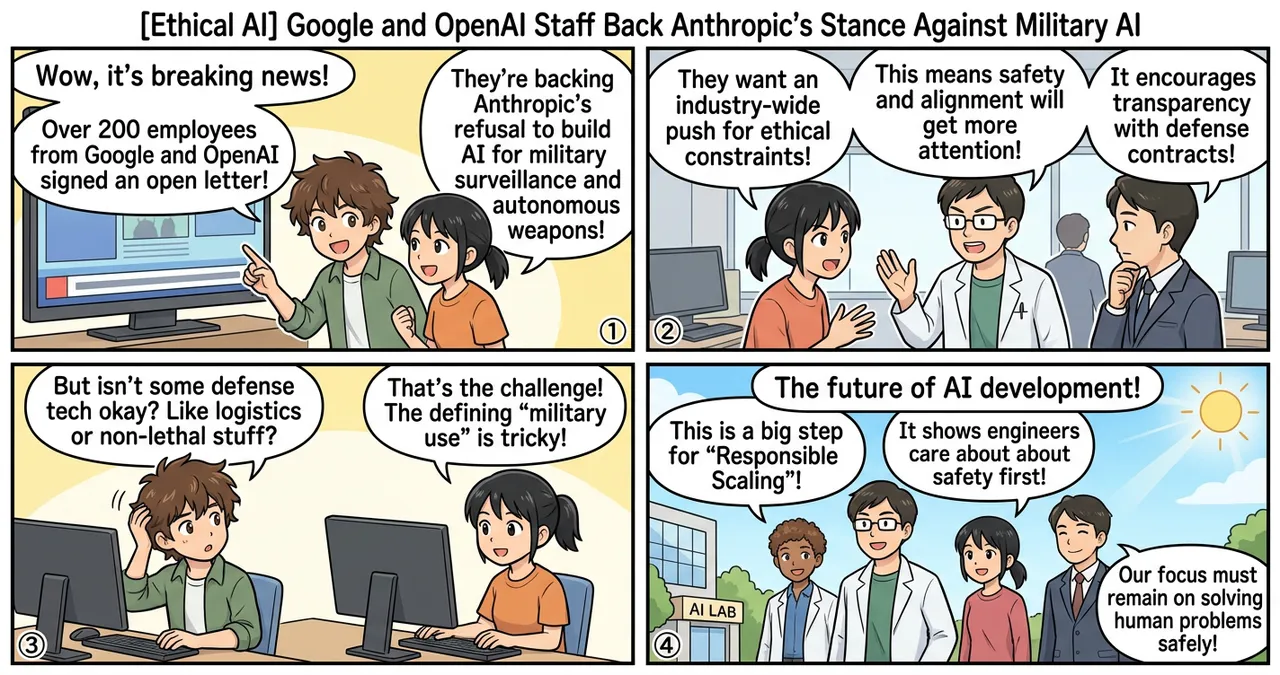

- Over 200 employees from major tech firms including Google and OpenAI have signed an open letter advocating for ethical constraints on AI.

- The letter specifically supports Anthropic’s public refusal to participate in the development of AI for military surveillance or autonomous weaponry.

- Signatories are calling for a unified industry front to resist pressure from defense departments regarding the militarization of artificial intelligence.

Detailed Breakdown

The Emergence of the Open Letter

A coalition of tech workers, primarily from Google and OpenAI, recently published an open letter addressing the intersection of artificial intelligence and national defense. This collective action stems from growing internal concerns regarding how foundational models are being integrated into military operations. The signatories represent a significant cross-section of the AI workforce, signaling that ethical considerations are becoming a priority for those building the technology.

Anthropic’s Stance as a Benchmark

The letter highlights Anthropic’s specific policy of declining contracts related to military surveillance and the creation of autonomous lethal systems. Anthropic, structured as a Public Benefit Corporation, has historically emphasized AI safety and alignment. By publicly backing this stance, employees at competing firms are pressuring their own leadership to adopt similar red lines, suggesting that safety protocols should not be sacrificed for lucrative government contracts.

Demands for Transparency and Accountability

The signatories urge tech leaders to be more transparent about their dealings with defense agencies. They argue that without clear, enforceable boundaries, AI development could inadvertently contribute to global instability. The letter suggests that tech companies should collaborate to set industry-wide standards rather than competing to fulfill defense requirements that may violate their core ethical principles.

Why Is This Significant?

The significance lies in the shift from individual corporate policy to a cross-industry labor movement. Historically, defense contracts have been an important revenue source for some large tech companies. However, the risks specific to generative AI, such as hallucinations or unpredictable behavior, make its application in high-stakes military environments particularly controversial.

| Feature | Traditional Defense Tech | Proposed Ethical AI Framework |
|---|---|---|
| Primary Goal | Operational superiority and efficiency | Safety, alignment, and harm reduction |
| Governance | Government-led specifications | Independent safety audits and PBC status |
| Transparency | Classified or highly restricted | Publicly documented “red lines” |
| Risk Focus | Physical failure or interception | Algorithmic bias and autonomous escalation |

This movement represents a push for “Responsible Scaling,” where the speed of deployment is secondary to the assurance that the technology cannot be weaponized without oversight.

Impact on the Tech Industry

For engineers and developers worldwide, this development indicates that ethical alignment is becoming a key factor in talent retention. Top-tier AI researchers are increasingly choosing employers based on their commitment to safety. If major players like Google and OpenAI face internal pressure to reject certain military projects, it may lead to a bifurcation of the industry: firms that prioritize defense and those that prioritize “civilian-first” development.

Furthermore, this could force a re-evaluation of how “dual-use” technology (AI that can be used for both civilian and military purposes) is licensed and monitored. Companies may need to implement more robust end-user license agreements (EULAs) to prevent their models from being integrated into combat systems via third-party developers.

Points to Consider

While the open letter advocates for a noble cause, several complexities remain. Defining what constitutes “military use” is difficult; for example, using AI for logistics or administrative tasks within a defense department is vastly different from using it for targeting systems. There is also the concern that if Western companies unilaterally retreat from military AI development, it may create a strategic vacuum that is filled by entities with fewer ethical constraints.

Additionally, the financial implications are substantial. Defense spending on AI is projected to grow significantly over the next decade. Companies that strictly adhere to the signatories’ demands may face challenges from shareholders who prioritize market growth and government partnerships.

Try It Yourself

To better understand the nuances of this debate and the current state of AI ethics, consider the following steps:

- Review the Letter: Search for the full text of the open letter to see the specific language used by the signatories and the list of demands.

- Research Corporate Structures: Investigate the difference between a standard C-Corp and a Public Benefit Corporation (PBC) to understand why Anthropic’s legal structure allows for different ethical choices.

- Explore AI Safety Frameworks: Read Anthropic’s “Responsible Scaling Policy” alongside OpenAI’s “Preparedness Framework” to compare how each lab defines risk levels.

Summary

The collective support from Google and OpenAI employees for Anthropic’s anti-militarization stance marks a pivotal moment in the AI industry’s evolution. It reflects a growing consensus among workers that the ethical deployment of AI must take precedence over defense-related expansion. As the boundary between civilian and military technology continues to blur, the industry’s ability to maintain a unified ethical front will likely determine the long-term societal impact of artificial intelligence.

Why It Matters

This news highlights a critical tension between rapid technological advancement and the ethical responsibilities of the people building it. The outcome of this movement could influence whether AI becomes a tool for global surveillance and warfare or remains focused on solving complex human problems in a safe, controlled manner.

Glossary

- Public Benefit Corporation (PBC): A type of for-profit corporate entity that includes positive impact on society and the environment as part of its legally defined goals.

- Autonomous Weapons: Systems capable of selecting and engaging targets without direct human intervention or control.

- Responsible Scaling Policy (RSP): A framework adopted by AI labs to ensure that as their models become more powerful, safety measures and oversight increase proportionally.
