Key Takeaways

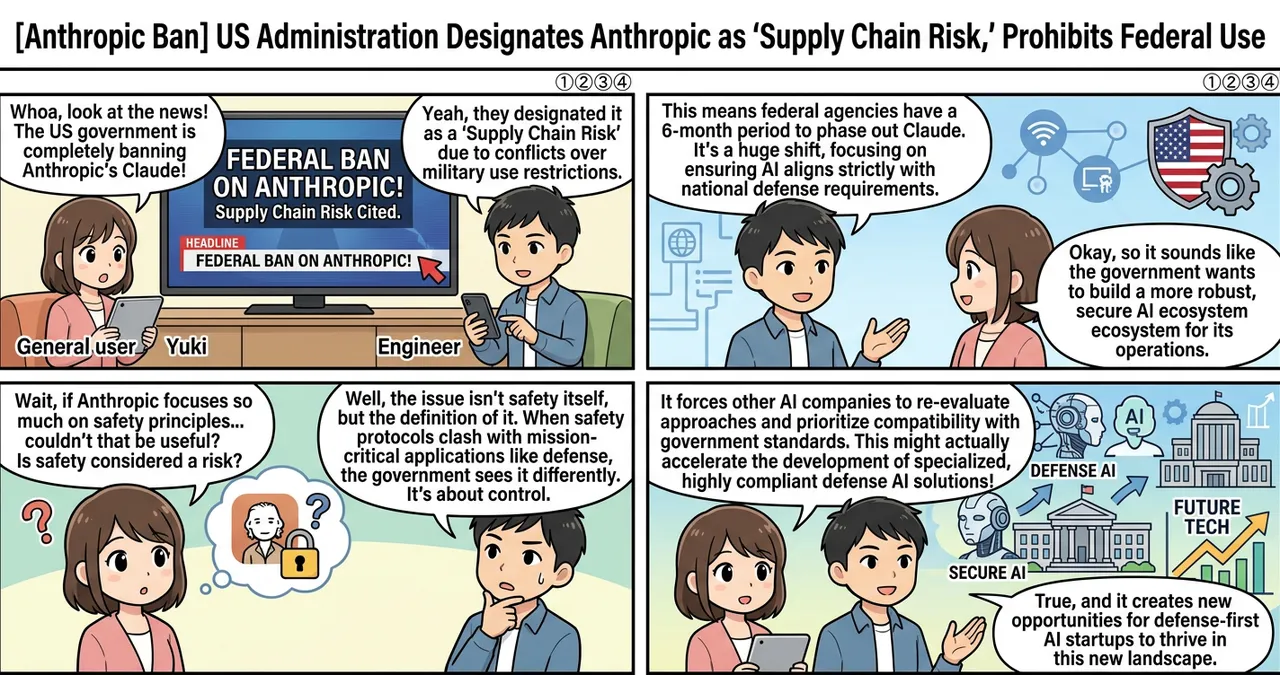

- Immediate suspension of all federal use and procurement of Anthropic’s AI products, including the Claude series of models.

- Designation of Anthropic as a “supply chain risk,” a label typically reserved for entities deemed threats to national security.

- A mandatory six-month phase-out period for all existing government contracts and integrations involving Anthropic technology.

Detailed Breakdown

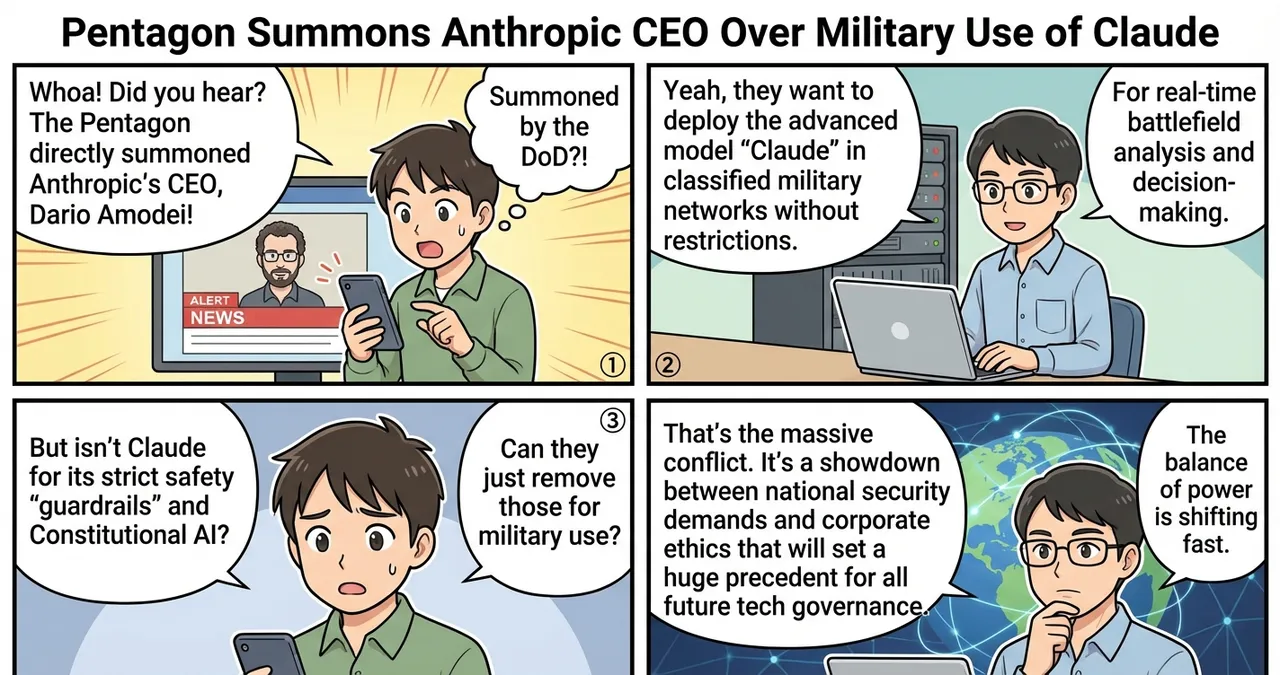

The Catalyst: Military Use Restrictions

The friction between the executive branch and Anthropic came to a head over the company’s usage policy. Anthropic has long imposed strict limits on deploying its models in lethal autonomous weapons systems and direct combat operations. President Trump and Defense Secretary Pete Hegseth identified these restrictions as an impediment to national defense capabilities, prompting the ban and the accompanying designation.

The “Supply Chain Risk” Designation

By designating a domestic AI firm as a supply chain risk, the administration has invoked authorities usually applied to foreign telecommunications companies. The classification signals that the government treats Anthropic’s refusal to meet military requirements as a security vulnerability. The order bars any federal agency from entering into new contracts with Anthropic and requires its software to be removed entirely from government infrastructure by the end of the third quarter of 2026.

Anthropic’s Response and Legal Strategy

Anthropic has signaled that it intends to challenge the designation in court. The company argues that its safety protocols are essential to preventing misuse of powerful AI and that the “supply chain risk” label is being applied arbitrarily. Legal experts suggest the case could turn on whether the government can compel a private company to modify its core safety architecture for military purposes.

Why Is This Significant?

This move represents a fundamental shift in how the US government interacts with the domestic AI industry. Previously, federal policy focused on incentivizing innovation; it now also demands alignment with defense priorities.

| Feature | Previous Approach | New Policy (Post-Ban) |
|---|---|---|
| Vendor Status | Preferred partner for safety-first AI | Designated “Supply Chain Risk” |
| Compliance | Voluntary safety commitments | Mandatory military utility requirements |
| Procurement | Merit-based, driven by performance | Contingent on “national security alignment” |
| Implementation | Gradual integration across agencies | Immediate halt and 6-month removal |

The technical significance lies in the rejection of “Constitutional AI,” Anthropic’s method of training models against a written set of ethical principles, wherever those principles conflict with state-defined operational needs.

Impact on the Tech Industry

The ban sends a powerful signal to the broader tech ecosystem. AI developers must now weigh the benefits of federal contracts against the potential for executive intervention if their ethical frameworks clash with government objectives.

- Model Alignment: Other major AI labs, such as OpenAI and Google, may face increased pressure to demonstrate “military readiness” to avoid similar designations.

- Venture Capital: Investors may become wary of firms that prioritize safety over government compatibility, potentially shifting the funding landscape toward “defense-first” AI startups.

- Global Competition: This domestic conflict could inadvertently slow the integration of advanced AI within the US civil service, potentially allowing international competitors to close the gap in administrative efficiency.

Points to Consider

While the administration cites national security, the move carries several practical and legal complexities. The definition of “supply chain risk” is being tested in a new context, and the outcome of Anthropic’s legal challenge will set a major precedent for the industry. Furthermore, many federal agencies currently rely on Claude for data analysis and administrative automation; transitioning these systems within a six-month window presents a significant technical and logistical hurdle for IT departments.

Try It Yourself

For organizations or individuals tracking these developments, the following steps are recommended:

- Audit AI Dependencies: Review your organization’s software stack to identify any tools or third-party services that rely on Anthropic’s API.

- Develop a Multi-LLM Strategy: To mitigate the risk of sudden policy shifts, ensure your infrastructure is “model-agnostic,” allowing you to switch between different AI providers (e.g., OpenAI, Meta, or open-source models) if necessary.

- Monitor Federal Procurement Data: Keep a close watch on the “System for Award Management” (SAM.gov) for updates on prohibited vendors and new compliance requirements for government contractors.
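For the model-agnostic point, one common pattern is to hide every vendor behind a single small interface and choose the backend from configuration. The sketch below assumes a hypothetical one-method interface (`complete(prompt) -> str`); `ChatProvider`, `FunctionProvider`, and `ModelRouter` are illustrative names, and the lambdas stand in for real vendor SDK calls, which differ per provider and are not shown.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable, Protocol


class ChatProvider(Protocol):
    """Hypothetical minimal interface that every backend adapter implements."""

    name: str

    def complete(self, prompt: str) -> str: ...


@dataclass
class FunctionProvider:
    """Adapter around any callable; a real adapter would call a vendor SDK."""

    name: str
    fn: Callable[[str], str]

    def complete(self, prompt: str) -> str:
        return self.fn(prompt)


@dataclass
class ModelRouter:
    """Sends each request to the first provider that is allowed and working."""

    providers: list[ChatProvider]
    blocked: set[str] = field(default_factory=set)

    def block(self, name: str) -> None:
        # A policy change becomes a configuration update, not a code rewrite.
        self.blocked.add(name)

    def complete(self, prompt: str) -> str:
        last_error: Exception | None = None
        for provider in self.providers:
            if provider.name in self.blocked:
                continue
            try:
                return provider.complete(prompt)
            except Exception as exc:  # e.g. outage or revoked credentials
                last_error = exc
        raise RuntimeError("no permitted provider succeeded") from last_error


router = ModelRouter([
    FunctionProvider("anthropic", lambda p: f"[claude] {p}"),
    FunctionProvider("local", lambda p: f"[local] {p}"),
])
router.block("anthropic")
print(router.complete("hello"))  # prints "[local] hello"
```

The order of the provider list expresses preference, and `block` lets an organization drop a vendor without touching any call sites. The broad `except Exception` is a deliberate simplification; a production version would catch only the errors each SDK actually raises.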

Summary

The Trump administration’s decision to ban Anthropic and label it a supply chain risk marks a historic confrontation between the federal government and the AI industry. By prioritizing military utility over the developer’s safety constraints, the government is redefining the boundaries of private-sector cooperation in national defense. The upcoming legal battle and the mandatory phase-out will likely determine the future trajectory of AI regulation and ethical development in the United States.

Why It Matters

This news is a watershed moment for the AI industry, signaling that technical safety frameworks are no longer purely a matter of corporate policy but are now subject to national security mandates. It forces a re-evaluation of how domestic technology firms balance ethical “alignment” with the operational demands of the state.

Glossary

- Supply Chain Risk: A designation indicating that a product or company poses a threat to the security or integrity of the government’s operational infrastructure.

- Constitutional AI: A method developed by Anthropic to train AI models to follow a specific set of rules or “principles” to ensure safety and ethical behavior.

- Federal Procurement: The process by which government agencies acquire goods and services from the private sector.