Key Takeaways

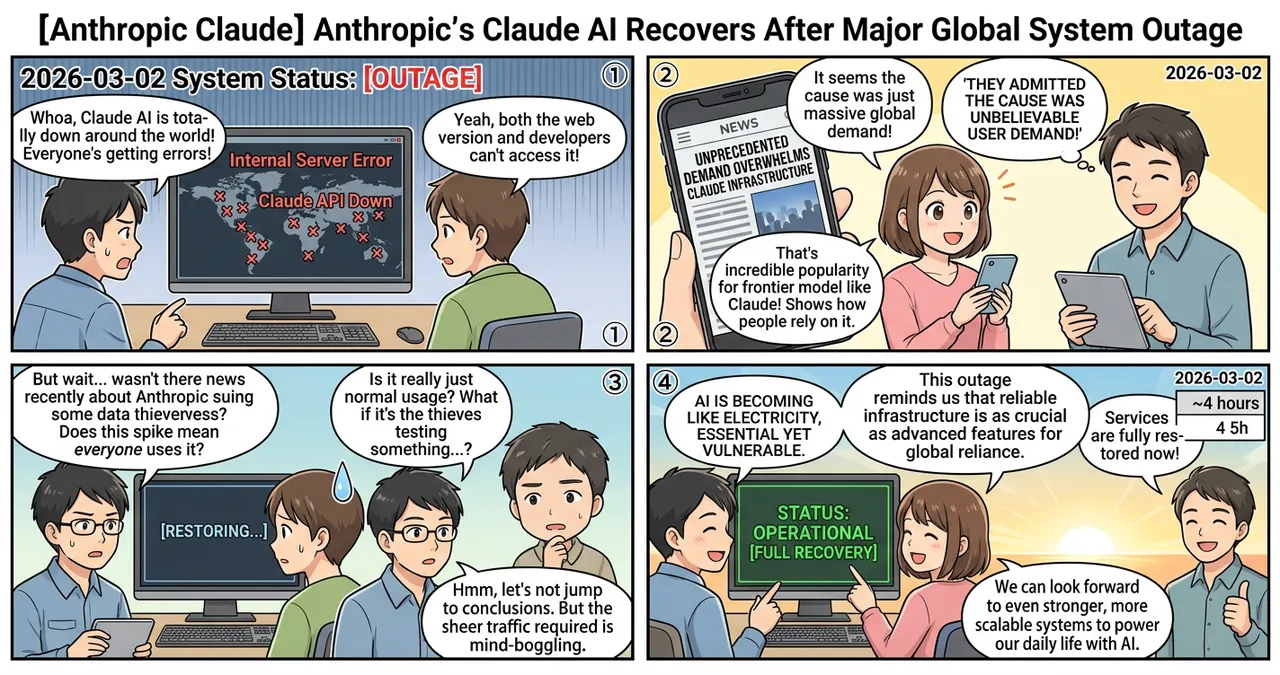

- A global service disruption affected both the Claude web interface and the developer API for several hours on the morning of March 2, 2026.

- Anthropic attributed the outage to “unbelievable demand,” suggesting that infrastructure capacity was temporarily overwhelmed by a surge in traffic.

- All services were fully restored within hours, but the event highlights the ongoing challenges of scaling frontier AI models for a global user base.

Detailed Breakdown

Timeline of the Outage

On the morning of March 2, 2026, users worldwide began reporting “Internal Server Error” messages and timeout issues when attempting to access Claude.ai. The disruption was not limited to the consumer-facing web portal; developers utilizing Anthropic’s API also reported total service failures. Anthropic’s official status page acknowledged the issue shortly after the initial reports, confirming that the team was investigating widespread connectivity problems.

Root Cause: Scaling Limits

Anthropic officially attributed the downtime to an unexpected, massive surge in demand. The company did not say whether a new feature release or a coordinated external event drove the surge, but its description of “unbelievable demand” suggests that the existing infrastructure hit a capacity limit. The incident comes during a period of heightened scrutiny for the company, following recent legal challenges regarding data usage (see “Anthropic Sues Three Chinese AI Firms Over 16 Million Data Points Stolen From Claude”).

Restoration and Recovery

Following several hours of intermittent availability, Anthropic engineers successfully stabilized the environment. By early afternoon, the company announced that all systems were operational. Post-recovery observations indicated that message history and API configurations remained intact, suggesting the outage was strictly a traffic-handling failure rather than a data integrity issue.

Why Is This Significant?

The stability of frontier AI models is becoming a matter of global economic importance. As these tools move from experimental toys to core business infrastructure, downtime results in immediate productivity losses.

| Metric | Claude Outage (March 2026) | Typical Industry Standard |
|---|---|---|
| Duration | Approximately 3-4 hours | 99.9% Uptime (SLA) |
| Scope | Web, Mobile, and API | Varies by incident |
| Primary Cause | Infrastructure Capacity (Demand) | Software bugs or maintenance |
| User Impact | Global | Regional or localized |
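
The Duration row compares an outage length with an uptime percentage, so a rough conversion helps put the two on the same scale. The sketch below assumes a 30-day measurement window and a 3.5-hour outage (the midpoint of the 3-4 hour estimate). Both figures are assumptions made for illustration, not numbers published by Anthropic.

```python
# Convert between an uptime percentage and a downtime budget.
# Assumptions (not from Anthropic): a 30-day measurement window and
# a 3.5-hour outage, the midpoint of the "3-4 hours" estimate above.

MINUTES_PER_30_DAYS = 30 * 24 * 60  # 43,200 minutes


def downtime_budget_minutes(uptime_pct: float, window_minutes: int = MINUTES_PER_30_DAYS) -> float:
    """Minutes of downtime allowed in the window at the given uptime percentage."""
    return window_minutes * (1 - uptime_pct / 100)


def achieved_uptime_pct(outage_minutes: float, window_minutes: int = MINUTES_PER_30_DAYS) -> float:
    """Uptime percentage over the window if the only downtime was one outage."""
    return 100 * (1 - outage_minutes / window_minutes)


if __name__ == "__main__":
    budget = downtime_budget_minutes(99.9)
    uptime = achieved_uptime_pct(3.5 * 60)
    print(f"99.9% uptime allows about {budget:.1f} minutes of downtime per 30 days")
    print(f"A 3.5-hour outage alone gives about {uptime:.2f}% uptime for that window")
```

Under these assumptions, a 99.9% target allows about 43 minutes of downtime per 30 days, so a single 3.5-hour outage would use nearly five times that budget.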

The technical significance lies in the difficulty of load balancing for Large Language Models (LLMs). Unlike traditional web services, LLM inference requires massive, specialized GPU clusters that cannot always be scaled horizontally at a moment’s notice.
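
To see why fixed capacity matters, consider a toy model in which requests arrive faster than a fixed pool of accelerators can serve them. The function name and every number below are hypothetical, chosen only to illustrate the point. This is not a description of Anthropic's infrastructure.

```python
# Toy discrete-time backlog model: capacity is fixed, demand spikes.
# All numbers are hypothetical and chosen only for illustration.


def simulate_backlog(demand_per_min: list[int], capacity_per_min: int) -> list[int]:
    """Return the queue length at the end of each minute."""
    backlog = 0
    history = []
    for arrivals in demand_per_min:
        # Serve as much as capacity allows; the rest waits in the queue.
        backlog = max(0, backlog + arrivals - capacity_per_min)
        history.append(backlog)
    return history


if __name__ == "__main__":
    # Normal load of 80 requests/min, a 3-minute spike to 300, then normal again.
    demand = [80, 80, 300, 300, 300, 80, 80]
    print(simulate_backlog(demand, capacity_per_min=100))
```

In this model the queue keeps growing for as long as the spike lasts. Once demand returns to normal it drains by only 20 requests per minute, so a short spike can leave a long tail of timeouts unless capacity is added or requests are rejected early.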

Impact on the Tech Industry

For engineers and enterprises, this outage is a reminder of the risks of “single-model dependency.” Companies that had integrated Claude into their automated workflows found their operations halted. This is particularly relevant for advanced agentic workflows such as Claude Code’s Remote Control feature (see “Claude Code’s New ‘Remote Control’ Feature: Continue Development From Any Device”), which relies on persistent connectivity to maintain development environments.

Furthermore, the incident may accelerate the trend toward multi-model redundancy. Organizations are increasingly likely to implement “failover” systems that automatically switch to alternative models (such as GPT or Gemini) if their primary provider experiences a disruption.
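
The control flow of such a failover system can be sketched in a few lines. In the example below, `complete_with_failover`, `primary_down`, and `secondary_ok` are hypothetical names. The two provider functions are stand-ins that do not call any real API, and in practice each would wrap the SDK call for that provider.

```python
# Minimal failover sketch. Provider functions are hypothetical stand-ins;
# in practice each would wrap a real SDK call for that provider.
from typing import Callable, Sequence


class AllProvidersFailed(Exception):
    """Raised when every provider in the chain raised an error."""


def complete_with_failover(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try each (name, call) pair in order; return (name, response) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose: any failure triggers failover
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical providers used only to exercise the function.
def primary_down(prompt: str) -> str:
    raise RuntimeError("500 Internal Server Error")


def secondary_ok(prompt: str) -> str:
    return f"echo: {prompt}"


if __name__ == "__main__":
    chain = [("primary", primary_down), ("secondary", secondary_ok)]
    print(complete_with_failover("hello", chain))
```

A production version would likely also need per-provider timeouts and a check that prompts behave acceptably on the backup model, since different models can respond differently to the same input.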

Points to Consider

While Anthropic resolved the issue quickly, several challenges remain for the industry:

- Infrastructure Elasticity: The ability to handle sudden 10x or 100x traffic spikes remains a bottleneck for high-end AI providers.

- Centralization Risks: As more critical infrastructure depends on a handful of AI companies, a single point of failure can have cascading effects on the global economy.

- Transparency: While “high demand” is a plausible explanation, the lack of granular technical detail in public post-mortems can make it difficult for enterprise partners to perform their own risk assessments.

Try It Yourself

To minimize the impact of future AI service disruptions, consider the following steps:

- Monitor Official Status Pages: Bookmark the Anthropic Status Page to receive real-time updates during incidents.

- Implement Multi-Model APIs: Use abstraction layers or libraries that allow your application to switch between different AI providers (e.g., using LiteLLM or LangChain).

- Check Local Connectivity: If you encounter an error, verify it is a global issue by checking third-party monitoring sites like Downdetector before troubleshooting your own code.

- Develop Offline Fallbacks: For critical tasks, ensure there is a non-AI or local-model fallback to maintain basic functionality during cloud outages.
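
The status-page and connectivity checks above can be combined into a small triage helper. This is a sketch under stated assumptions: it assumes the status page publishes an Atlassian Statuspage-style JSON payload with a `status.indicator` field, it treats HTTP 529 as an overload signal, and the sample payload is invented. The function names are hypothetical.

```python
# Triage sketch: is a failure likely on the provider's side or in your code?
# Assumptions: the status page exposes an Atlassian Statuspage-style
# status.json payload, and the sample payload below is made up.

# Server errors plus 529, which is assumed here to signal an overloaded API.
PROVIDER_SIDE_CODES = {500, 502, 503, 504, 529}

# Statuspage-style indicator values that signal an ongoing incident.
INCIDENT_INDICATORS = {"minor", "major", "critical"}


def looks_provider_side(http_status: int) -> bool:
    """True for responses that usually indicate a server-side problem."""
    return http_status in PROVIDER_SIDE_CODES


def incident_in_progress(status_payload: dict) -> bool:
    """True if the payload's status indicator reports an incident."""
    indicator = status_payload.get("status", {}).get("indicator", "unknown")
    return indicator in INCIDENT_INDICATORS


def triage(http_status: int, status_payload: dict) -> str:
    """Suggest a next step from the HTTP status and the status-page payload."""
    if looks_provider_side(http_status) and incident_in_progress(status_payload):
        return "provider incident: wait or fail over"
    if looks_provider_side(http_status):
        return "server error without a posted incident: retry with backoff"
    return "likely client-side: check request, credentials, and rate limits"


if __name__ == "__main__":
    sample = {"status": {"indicator": "major", "description": "Partial outage"}}
    print(triage(500, sample))
    print(triage(401, sample))
```

Status pages can lag behind the start of an incident, so a server error with no posted incident is treated here as worth retrying rather than as proof that the problem is local.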

Summary

The global outage of Anthropic’s Claude on March 2, 2026, underscored the growing pains of a rapidly expanding AI ecosystem. While the service was restored within hours, the event highlighted the fragility of relying on a single provider for critical infrastructure. As demand for frontier models continues to climb, the industry must focus on building more resilient and scalable systems to prevent similar disruptions in the future.

Why It Matters

This news signals that even the most advanced AI companies are struggling to keep pace with the exponential growth in user adoption. For society, it emphasizes that AI is no longer a luxury but a utility that requires the same level of reliability as electricity or the internet.

Primary Sources

- Mashable: Anthropic’s Claude AI is back up after a brief outage

- Anthropic Official Status Page (Historical Reference)

Glossary

- API (Application Programming Interface): A set of rules that allows different software applications to communicate with each other; in this context, it allows developers to plug Claude’s intelligence into their own apps.

- Frontier Model: A term used to describe the most advanced, large-scale AI models that represent the current state-of-the-art in the industry.

- Load Balancing: The process of distributing network traffic across multiple servers to ensure no single server becomes overwhelmed, which helps maintain service stability.

- Inference: The process of an AI model generating an output (like text or code) based on the input it receives from a user.
