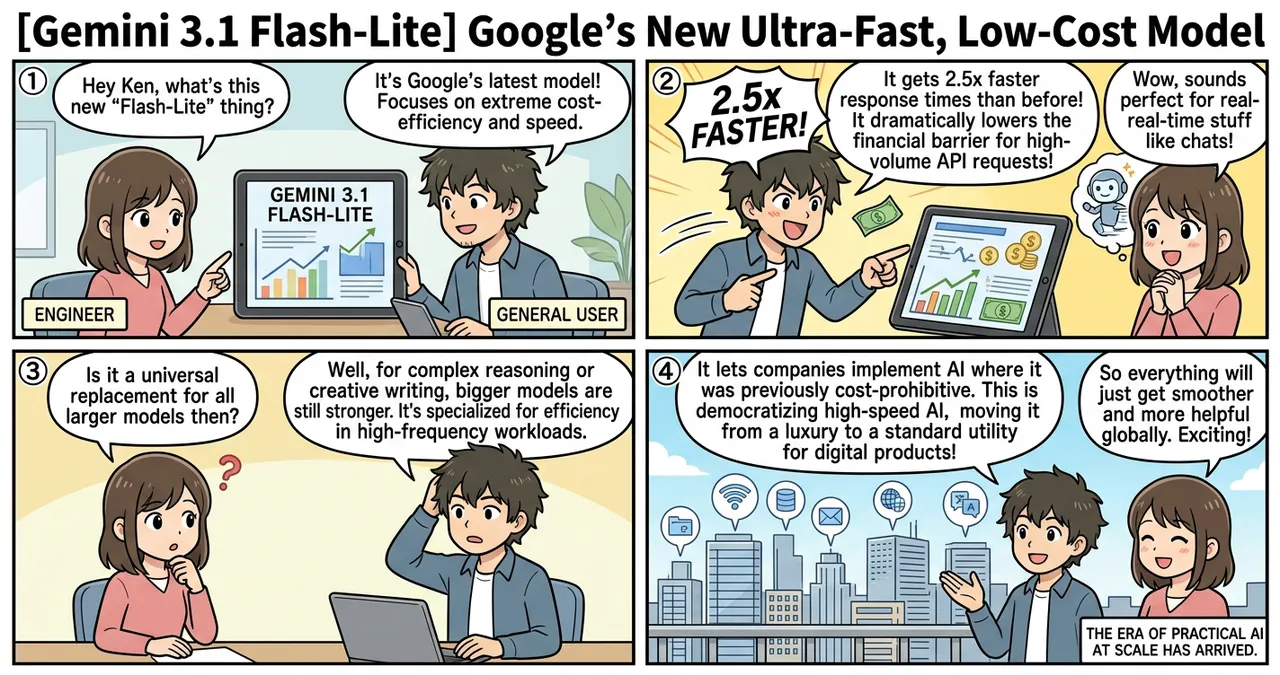

Google has announced the release of Gemini 3.1 Flash-Lite, the latest addition to the Gemini 3 series. This model is specifically engineered to provide the highest level of cost-efficiency and speed within the current lineup. As AI integration becomes standard across global industries, the demand for models that can handle massive workloads without prohibitive costs has reached a critical point. Gemini 3.1 Flash-Lite addresses this by prioritizing low latency and high throughput for developer-centric tasks.

Key Takeaways

- 2.5x faster response times compared to the previous Gemini 2.5 Flash model, significantly reducing latency for end-users.

- Industry-leading cost-efficiency, designed to lower the financial barrier for high-volume API requests and large-scale deployments.

- Optimized for high-frequency workloads, making it ideal for real-time applications such as chat interfaces and automated data processing.

Detailed Breakdown

Optimized Performance Architecture

Gemini 3.1 Flash-Lite builds on the foundational advancements of the Gemini 3 series but uses a streamlined architecture that reduces the computational overhead of inference. The result is a model that processes requests and generates responses about 2.5x faster than Gemini 2.5 Flash.

Focus on Developer Workloads

The “Lite” designation indicates a focus on specific developer needs rather than general-purpose reasoning. While the model maintains the core capabilities of the Gemini family, it is fine-tuned for tasks where speed is more critical than deep, multi-step logical deduction. This includes tasks like summarization, categorization, and simple conversational responses where the delay of a larger model would degrade the user experience.

Why Is This Significant?

The release of Gemini 3.1 Flash-Lite marks a shift in the AI landscape from “bigger is better” to “efficiency is essential.” For many enterprise applications, the bottleneck is no longer the model’s intelligence, but the cost and time required to execute millions of small tasks.

| Feature | Gemini 2.5 Flash | Gemini 3.1 Flash-Lite | Improvement |
|---|---|---|---|
| Response Speed | 1.0x (Baseline) | 2.5x | 150% Increase |
| Cost per 1M Tokens | Standard | Reduced | Significant Savings |
| Primary Use Case | General Purpose | High-frequency API calls | Specialized Speed |

By offering a model that is substantially faster and cheaper, Google allows companies to implement AI in areas where it was previously cost-prohibitive, such as real-time translation for live feeds or massive-scale sentiment analysis of social media data.
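To make the cost argument concrete, here is a minimal sketch of a monthly spend estimate for a high-volume workload. The `Pricing` class, the `monthly_cost` helper, and every price and volume below are illustrative assumptions, not published rates; substitute the figures from the official pricing page before drawing conclusions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """USD per 1M tokens. All values used below are placeholders."""
    input_per_million: float
    output_per_million: float


def monthly_cost(pricing: Pricing, requests_per_month: int,
                 input_tokens: int, output_tokens: int) -> float:
    """Estimate monthly spend for a fixed per-request token profile."""
    total_in = requests_per_month * input_tokens
    total_out = requests_per_month * output_tokens
    return (total_in * pricing.input_per_million
            + total_out * pricing.output_per_million) / 1_000_000


# Placeholder prices, NOT real rates for any Gemini model.
baseline = Pricing(input_per_million=1.00, output_per_million=4.00)
lite = Pricing(input_per_million=0.50, output_per_million=2.00)

# Hypothetical workload: 10M short classification requests per month.
workload = dict(requests_per_month=10_000_000, input_tokens=300, output_tokens=50)

baseline_cost = monthly_cost(baseline, **workload)
lite_cost = monthly_cost(lite, **workload)
print(f"baseline: ${baseline_cost:,.2f}  lite: ${lite_cost:,.2f}  "
      f"savings: ${baseline_cost - lite_cost:,.2f}")
```

Because the cost scales linearly with request volume, even a small per-token difference compounds quickly at millions of requests, which is the scenario the article describes.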

Impact on the Tech Industry

For software engineers and technology companies, Gemini 3.1 Flash-Lite changes the economics of AI integration. Startups can scale their services to millions of users with a significantly lower burn rate. Larger enterprises can replace traditional heuristic-based code with LLM-based logic with less concern about the latency spikes that typically accompany generative AI. In effect, the model moves high-speed AI from a luxury feature toward a standard utility for any digital product.

Points to Consider

While the speed and cost benefits are clear, Gemini 3.1 Flash-Lite is not a universal replacement for larger models. Users should be aware that the “Lite” version may have limitations in complex reasoning, creative writing, or highly nuanced instruction following compared to the standard Gemini 3.1 Pro. It is a specialized tool meant to complement, not replace, more robust models. Additionally, as with all high-speed models, ensuring output accuracy through rigorous testing remains a necessary step for developers.
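One way to treat the Lite model as a complement rather than a replacement is to route requests by task type. The sketch below is an assumption about how an application might do this; the model labels, the task categories, and the `choose_model` helper are hypothetical and are not part of any Google SDK.

```python
# Placeholder labels; replace with the model IDs your provider documents.
LITE_MODEL = "flash-lite"
PRO_MODEL = "pro"

# Task types the article names as a good fit for the Lite model.
LITE_TASKS = {"summarization", "categorization", "simple_chat"}


def choose_model(task_type: str, needs_multistep_reasoning: bool = False) -> str:
    """Pick the faster model only for simple, latency-sensitive tasks."""
    if needs_multistep_reasoning:
        return PRO_MODEL
    return LITE_MODEL if task_type in LITE_TASKS else PRO_MODEL


print(choose_model("summarization"))                                  # lite path
print(choose_model("summarization", needs_multistep_reasoning=True))  # pro path
print(choose_model("creative_writing"))                               # pro path
```

Defaulting unknown task types to the larger model keeps the failure mode conservative: an unclassified request costs more but is less likely to receive a degraded answer.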

Try It Yourself

- Access Google AI Studio: Log in to the developer console and select Gemini 3.1 Flash-Lite from the model dropdown menu.

- Update Your Configuration: Ensure your API key is valid and your environment is configured to use the latest Gemini 3.1 endpoints.

- Run a Benchmark: Compare the latency of your existing prompts against the new Flash-Lite model to measure the 2.5x speed improvement in your specific workflow.

- Review Pricing: Check the Vertex AI or AI Studio pricing page to calculate the potential cost savings for your production environment.
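The benchmark step can be sketched as a small timing harness. This is a minimal example under stated assumptions: `measure_latency` and `speedup` are hypothetical helpers, and the two `fake_*` functions are stand-ins that only sleep. To measure real models, replace them with functions that send the prompt to each model through your own client code.

```python
import statistics
import time
from typing import Callable


def measure_latency(call: Callable[[str], str], prompts: list[str],
                    repeats: int = 3) -> list[float]:
    """Return the wall-clock latency in seconds of every individual call."""
    samples = []
    for _ in range(repeats):
        for prompt in prompts:
            start = time.perf_counter()
            call(prompt)
            samples.append(time.perf_counter() - start)
    return samples


def speedup(baseline: list[float], candidate: list[float]) -> float:
    """Ratio of median latencies; 2.5 means the candidate is 2.5x faster."""
    return statistics.median(baseline) / statistics.median(candidate)


# Stand-ins for real model calls (assumption: they only simulate delay).
def fake_baseline(prompt: str) -> str:
    time.sleep(0.05)
    return "ok"


def fake_candidate(prompt: str) -> str:
    time.sleep(0.02)
    return "ok"


if __name__ == "__main__":
    prompts = ["Summarize this ticket.", "Classify this review."]
    old = measure_latency(fake_baseline, prompts)
    new = measure_latency(fake_candidate, prompts)
    print(f"median old: {statistics.median(old) * 1000:.1f} ms")
    print(f"median new: {statistics.median(new) * 1000:.1f} ms")
    print(f"speedup: {speedup(old, new):.2f}x")
```

The median is used instead of the mean so that a few slow outliers, such as cold starts or network hiccups, do not dominate the comparison. Note that a 2.5x speedup corresponds to latency falling to 40% of the baseline, not to a 250% reduction.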

Summary

Google’s Gemini 3.1 Flash-Lite represents a major step forward in making AI practical for large-scale, real-time applications. By delivering a 2.5x speed increase and superior cost-efficiency, it enables developers to build more responsive and affordable AI-driven tools. As the industry continues to mature, the focus on such optimized, task-specific models is likely to intensify.

Why It Matters

This release lowers the “intelligence tax” for developers, allowing for the widespread adoption of AI in low-latency environments. By making AI faster and cheaper, Google is facilitating a shift where generative models become an invisible, ubiquitous layer of the modern internet infrastructure.

Glossary

- Throughput: The amount of data or number of tasks a system can process within a specific timeframe.

- Latency: The delay between a user’s request and the system’s response, usually measured in milliseconds.

- Token: The basic unit of text (such as a word or part of a word) that an AI model processes and generates.

- Inference: The process of an AI model using its trained knowledge to generate a response or prediction based on new input.
